
By Eli Epperson

Edited by Helena Rios

In South Korea, a competition for television display dominance is raging. The competitors, Samsung and LG, lead the world in producing this pervasive technology, a market expected to be worth close to $170 billion by 2022 [1]. As mentioned in the first part of this article, “Part 1: The OLED Explained,” the most popular type of television display is the familiar LCD, or liquid-crystal display. When it comes to LCD manufacturing, Samsung is the clear champion, holding approximately 21% of the market, while its competitor LG controls only around 12% [2]. However, although the LCD may be the most popular type of television display, one emerging technology touts a number of advantages over it: the organic light-emitting diode (OLED) display. While LG lags behind its rival in LCD production, the company has emerged as the leader in OLED display technology. The tech giant’s success in this field comes by virtue of an unexpectedly effective secret weapon: a collection of electronics patents acquired years ago. With the numerous advantages that OLED displays have over LCDs, LG may now be in a position to gain control of the lucrative television market.

Despite the relatively recent debut of the OLED television, the technology behind these ultra-thin displays dates back to the late 1980s [3]. Chemists Ching W. Tang and Steven Van Slyke developed OLED technology at the Eastman Kodak Company twenty years before the release of the world’s first OLED television in 2007 [4]. In 2003, Kodak built the technology into one of the earliest consumer products to have an OLED screen: the EasyShare LS633 digital camera, which featured a 2.2-inch OLED display. Around the same time, Kodak had begun to see declining sales as consumers came to prefer digital cameras over film ones. This shift presented a bittersweet dilemma for the company, since Kodak had not only pioneered the digital camera but also held a major stake in the success of film cameras, a longtime moneymaker [5]. While the market for OLED televisions was coming into focus, the camera company decided to set its sights elsewhere, given the tremendous investment that developing an OLED television would require.

In 2009, Kodak finally sold off its OLED intellectual property to LG in the form of approximately 2,200 patents and patent applications [6]. Many people thought nothing of this transaction. In fact, LG’s senior director of communications, Ken Hong, admitted that when LG bought the rights to Kodak’s OLED technology, “nobody else thought that was going to be a successful business” [7]. But the deal turned out to be incredibly fruitful for LG. This success did not stop the company from filing a lawsuit against Kodak a few years later, claiming that a number of Kodak’s patents actually belonged to one of LG’s subsidiaries. In the same year that the iconic camera corporation filed for bankruptcy [5], the two companies agreed on a settlement that cost Kodak three of the disputed patents [6]. For LG, the $100 million price tag for Kodak’s patent treasure trove (plus some legal fees) was a small price to pay, considering that the deal later drove Samsung to halt production of its own OLED televisions [7] [8].

The reason behind LG’s success lies in its development of Kodak’s white OLED technology, which offers a number of advantages over Samsung’s approach. Samsung’s OLED televisions used a technique called direct emission, in which every pixel featured three subpixels, each emitting a separate primary color: red, green, or blue. Because of the physics of OLED materials, each primary color is emitted at a different brightness. Blue OLEDs pose a particular challenge, as they are not as bright as red or green subpixels of the same size. To compensate, Samsung’s televisions featured blue subpixels that were larger than their red and green neighbors, improving the brightness of the pixel as a whole. This more complicated patterning scheme, among other factors, made Samsung’s direct emission displays more difficult to manufacture than LG’s displays, which used a method called WRGB. Each pixel of a WRGB display uses a white OLED together with color filters to produce the red, green, and blue subpixels. In addition, a fourth, unfiltered subpixel emits white light, making WRGB televisions brighter than their direct emission counterparts [I]. But what was truly pivotal for LG’s success was that WRGB displays were half as expensive to manufacture as Samsung’s direct emission models. Years behind in research and losing money to its rival, Samsung quietly abandoned OLED televisions in 2014 [8].

While Kodak’s WRGB technology helped establish LG as the leader in OLED televisions, Samsung still enjoys a comfortable lead in LCD production. OLED displays may beat everything else on the market in key factors like picture quality, owing to their high contrast ratio, but LCDs remain hundreds of dollars cheaper, an appeal that most consumers cannot ignore. For tech enthusiasts, though, the only affordable organics need not be the veggies at the local farmers’ market: many smaller devices like smartphones, cameras, and laptops also feature OLED displays. Rest assured, LG will continue its OLED research to one day herald a new age of affordable OLED televisions. For the love of contrast, let’s hope that day comes soon.
References

[1] <http://www.marketsandmarkets.com/PressReleases/display.asp>

[2] <https://www.statista.com/statistics/267095/global-market-share-of-lcd-tv-manufacturers/>

[3] <https://www.oled-info.com/oled-pioneers-ching-tang-and-steven-van-slyke-were-inducted-2013-consumer-electronics-hall-fame>

[4] <http://www.oled-info.com/sony-xel-1>

[5] <http://www.independent.co.uk/news/business/analysis-and-features/the-moment-it-all-went-wrong-for-kodak-6292212.html>

[6] <http://www.oled-info.com/global-oled-technology-says-they-prevailed-kodak-lawsuit-assigned-3-more-patents-and-pioneers-oled>

[7] <https://www.cnet.com/news/lg-says-white-oled-gives-it-ten-years-on-tv-competition/>

[8] <http://blog.gsmarena.com/samsung-stops-making-oled-tvs-due-lgs-dominance/>


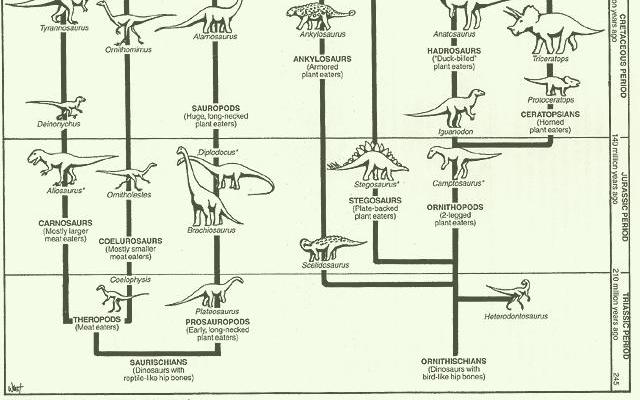

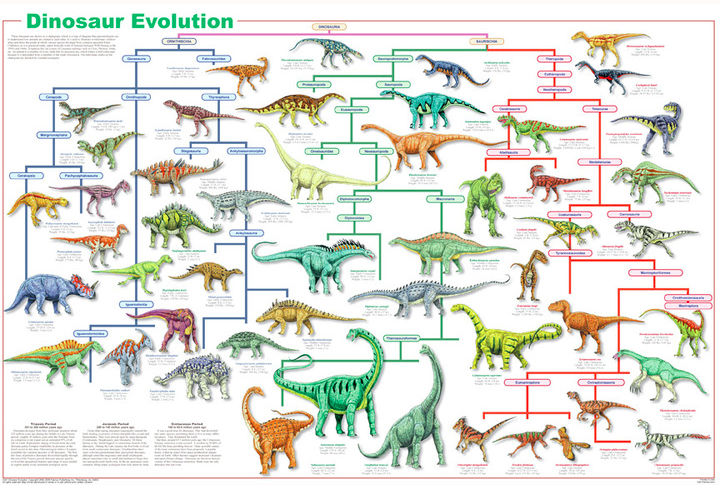

By Eva Sophia Blake

Edited by Kim Chia

For over a century, dinosaurs have been classified using the system created by the paleontologist Harry Seeley. Matthew G. Baron, a Ph.D. candidate at the University of Cambridge, hopes to redefine how scientists organize dinosaurs. His work has been supported and encouraged by his two advisors, David B. Norman and Paul M. Barrett.

Seeley’s system, created in 1888, divided dinosaurs into two main categories: the bird-hipped (Ornithischia) and the lizard-hipped (Saurischia). The bird-hipped category includes armored dinosaurs like the stegosaurs, while the lizard-hipped category includes dinosaurs such as the tyrannosaurs. Until around 1980, the two groups were believed to be completely distinct, without a shared ancestor between them. This assumption shaped how paleontologists understood dinosaur evolution: the division mapped out evolutionary pathways for them. Baron’s new system calls much of the work done on dinosaurs into question.

Baron’s study originates in what is called a “sister-group” relationship between a group in each main category, indicating that the two categories are not as distinct as previously believed. In light of this discovery, Baron suggests that the two categories should be replaced by different ones. Once Baron realized that the distinction was less certain than assumed, he spent the following three years studying dinosaur fossils. His goal was to find better features for distinguishing between dinosaurs, features that would eventually yield a new family tree.

Baron came up with 457 potential characteristics for organizing the species, drawn from an evaluation of dinosaurs that ranged widely in both time and space. Overall, 74 different groups were scored for the diagnostic features Baron had identified. These data were then analyzed using the computer program TNT 1.5-beta, which created and evaluated 32 billion potential family trees. TNT lends weight to Baron’s system because of its advanced statistical ability.

Baron’s recently published study, “A new hypothesis of dinosaur relationships and early dinosaur evolution,” focuses on the family tree evaluated as most accurate. This classification connects the bird-hipped Ornithischia with the Theropoda, a sub-category of Saurischia. Because of this connection, Baron suggests two different main categories: the Ornithoscelida (a combination of the Ornithischia and the theropods) and the Saurischia. This new distinction greatly changes the make-up of the family tree, suggesting a very different evolutionary story.

The new classification indicates that the Ornithischia and the Theropoda evolved at a similar point in time and from a joint ancestor. Based on this new information, dinosaurs are suggested to have existed about 247 million years ago, during the Middle Triassic. The new tree also implies that the original dinosaurs were omnivorous and had grasping hands, a distinct evolutionary advantage primarily seen in humans. This trait explains dinosaurs’ success in comparison to other species in the Jurassic period. Additionally, while many have assumed that dinosaurs emerged in South America, the new classification suggests that the Northern Hemisphere was an equally likely origin. Baron suggests Scotland as a potential origin point because the creature Saltopus elginensis has many features in common with the early dinosaur Baron has reconstructed.

While the TNT analysis statistically supports the new classification as the most likely, many paleontologists remain unconvinced. It is unclear whether the scientific community will adopt Baron’s proposed system or continue to use the one that has existed for over a century. Paul Sereno, a paleontologist at the University of Chicago who has been a proponent and modern adaptor of Seeley’s original classification system, does not believe the new system makes any significant contribution because it lacks new features and scoring. Baron responded to this criticism by noting that rather than reconfiguring the characteristics, he simply created a classification that was not biased toward the historical one. Specifically, TNT does not have the knowledge of preexisting systems that a scientist would.

Baron’s article has certainly laid the groundwork for more conversation about classification. What remains to be seen is how other paleontologists will respond and how new data may eventually change the proposal.

By Mariel Corinne Tai Sander

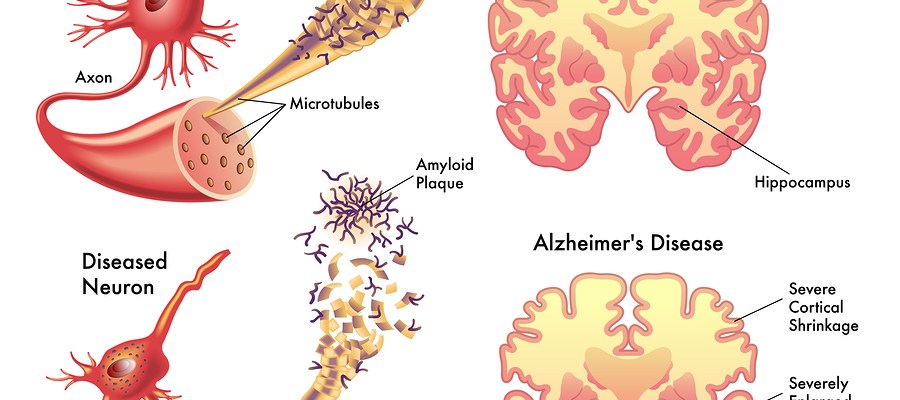

Edited by Kim Chia

In 1907, at a conference in Tübingen, Dr. Alois Alzheimer described a curious disease characterized by “numerous small miliary foci…found in the superior layers…the storage of a peculiar material in the cortex” [1]. While researchers now know what those “small miliary foci” are (plaques of beta amyloid proteins in the brain), the reason why they form and lead to the neurodegenerative disease known as Alzheimer’s remains a mystery.

Researchers at Harvard University have found a possible explanation for why these plaques form, and their theory is connected to the workings of our immune systems. They have found that, as part of the innate immune system, amyloid proteins are present throughout the body and trap foreign microbes. The researchers propose that the same thing happens in the brain: when a microbe crosses the blood-brain barrier, the immune system sends in beta amyloid proteins to stop the pathogen. This accumulation of protein forms the characteristic plaques of Alzheimer’s [2]. The theory implies that the plaques might be caused by infections in the brain.

The researchers have found this hypothesis consistent with results from tests in individual neurons, yeast, roundworms, fruit flies, and mice. In one experiment, they injected Salmonella bacteria into the brains of two groups of young mice: one that produced beta amyloid proteins and one that did not. The brains of the mice that produced the protein filled with beta amyloid plaques, which engulfed the bacteria. The mice that could not produce the protein had no plaques, but they also died more quickly from the infection [2].

This model of Alzheimer’s is further complicated by a study at the Weizmann Institute of Science in Israel. Michal Schwartz and her team gave mice PD-1 blockers, which kept their immune systems active and halved the amount of beta amyloid, then administered cognitive tests. After receiving the blockers, the mice scored better than they had before [3].

What these two studies show is that the development of Alzheimer’s disease is intrinsically dependent on the immune system. However, it is not clear whether the disease results from an overactive or an underactive immune system. The Harvard study would suggest the former, that is, an overproduction of beta amyloid proteins in the brain. Michal Schwartz’s study, on the other hand, suggests the opposite: the immune system could play an essential role in clearing the brain of these protein clumps. Either way, the idea that the cause of Alzheimer’s, and thus the cure, lies within our own immune systems is a groundbreaking one.

References

[1] http://scienceblogs.com/neurophilosophy/2007/11/02/alois-alzheimers-first-case/

[2] https://www.nytimes.com/2016/05/26/health/alzheimers-disease-infection.html?_r=0

[3] http://www.pbs.org/wgbh/nova/next/body/immunotherapy-drugs-used-for-cancer-could-also-fight-alzheimers/

By Audrey Lee

Edited by Helena Rios

Every day, over three billion people lose themselves in a virtual reality. Most of them will send a Snap to friends, like a selfie on Instagram, or react angrily to a rant on Facebook. They return to the physical world only to attend to necessities and responsibilities such as school, work, and sleep before resuming their trance in cyberspace.

Social media platforms are becoming increasingly popular as they broaden and accelerate how we communicate. Since the launch of Facebook Live a year ago, many of us have come to rely on the site for up-to-the-minute news and live streams of monumental events. While many find Facebook a convenient and efficient news source, the ongoing controversy over its dissemination of fake news suggests that it may not be the most credible. The company is currently under fire for allowing misinformation to spread and thereby misguiding its users’ perspectives and decisions. Although many people claim that it is Facebook’s responsibility to correct this issue, the real solution lies not within the company’s developers or algorithms, but within us.

A key factor in how we develop as socially conscious individuals is how we experience, observe, and reflect on real-world situations. As our awareness shifts from atoms to bits, we lose touch with our physical world and allow our consciousness to be influenced by our interactions in cyberspace. It is not surprising that the Internet and social media currently play dominant roles in our lives. When I received my first smartphone, I was instantly awestruck by the freedom to access the Internet from anywhere at any time. I no longer had to wait until I was in front of a computer to check my email and read updates on world news. Now I could receive reports and exchange messages almost immediately on a connected mobile device. Even when it comes to learning, much of what I wish to know comes from “just Googling it.”

While the Internet has made it more efficient for us to search for answers in the vast sea of information, it has also led us to adopt a shallower way of thinking. Instead of delving deeply into topics and learning from experience, we often read the first few articles a search engine returns and simply accept that their authors know more than we do. Particularly in areas we are not familiar with, we believe whatever we read on what looks like a credible source. This shallow surfing in place of contemplative thinking has come to dominate not only our Internet searches but also our understanding of ourselves.

As social media networks pervade our daily lives, they not only affect the way we interact with others but also change the way we think and view ourselves in virtual and physical reality. In our lives on the screen, there are no limits to how many profiles we can create and how many background stories we can fabricate. Many people also tailor their descriptions of themselves to whatever would be popular and socially desirable online. For instance, Instagram posts can easily be altered to attract more followers, and headlines on digital news articles can be sensationalized to attract greater readership. While exaggerated media are not unique to the Internet, our online social networks have made it much easier to create fake news and spread it like wildfire. These virtual identities, together with our constant contact with misinformation, shape the ways we think about ourselves in real life. Self-identity, which used to be built upon real-life experiences, observations, and deep thinking, is now based on virtual experiences that can be rife with misinformation and shallow understandings.

Although there is a tendency to point fingers at mainstream media platforms like Facebook and TV networks for media bias, it is important to realize that the onus is not on them to reform the way they operate. All social media and news media companies are simply doing what they are meant to do: moving information across a global network regardless of whether the information is true or false. Ultimately, it is up to us as users to determine how much we allow our perceptions to be affected by these media. It is impossible for us to avoid the pervading effects of social media and the Internet in our society. However, we can balance their impact by maintaining a boundary between our internal awareness and external virtual influences. In the Internet Age, where information and misinformation can be easily dispersed, it is up to our self-consciousness to determine the stability of our inner lives.

References

http://newsroom.fb.com/news/2016/04/introducing-new-ways-to-create-share-and-discover-live-video-on-facebook/

https://www.theguardian.com/technology/2016/dec/12/facebook-2016-problems-fake-news-censorship

http://web.mit.edu/allanmc/www/mcluhan.mediummessage.pdf

http://www.factcheck.org/2016/10/did-the-pope-endorse-trump/
