Protecting Our Defenses: Our Extensive Use of Antibiotics Reduces the Diversity of the Microbiome

4/27/2015

By Tiago Palmisano

Edited By Arianna Winchester

One of the greatest accomplishments of civilization is our ability to purposefully modify the natural world. Scientific research has taught us that we are surrounded by microorganisms too small to see with the naked eye. Of the hundred trillion cells that constitute the average human body, only ten percent are our own human cells. The other ninety percent are microorganisms such as bacteria or viruses. This community of microorganisms is what we call the human microbiome. Much of modern medicine is based around controlling the microbiome to encourage the health of our bodies. Specifically, we are able to regulate the activity of the bacteria in the microbiome through a class of drugs known as antibiotics.

Luckily, most of the bacteria in the environment are either beneficial to our bodies or don’t affect us, but a few species do pose a threat to human health. Antibiotics are drugs that allow us to selectively destroy bacteria without damaging human cells. Alexander Fleming discovered the first of these revolutionary drugs, penicillin, in 1928. Since then, dangerous illnesses caused by bacteria, such as tuberculosis and syphilis, have become curable and far less common in many parts of the world.

However, the use of antibiotics is a double-edged sword; recently, certain risks have become associated with antibiotic use. Because antibiotics destroy only the susceptible bacteria in our microbiome, we inadvertently encourage the growth of strains that can withstand our medicine. Over time, bacteria evolve to survive the effects of our antibiotics. For example, extensive use of the antibiotic methicillin has led to the emergence of MRSA, which stands for methicillin-resistant Staphylococcus aureus. Patients who get MRSA have become infected with a strain of bacteria that has acquired immunity to the drug we used to kill it. By using our antibiotic treatments, we have indirectly created a dangerous disease.
Bacterial evolution is much faster than the rate at which we can synthesize new drugs, and so the rise of antibiotic resistant (ABR) microbes is a significant problem for the medical community. Gonorrhea, which used to be an easily treatable disease, may soon become completely resistant to current antibiotics. We cannot deny that antibiotics save lives and increase our standard of living, but the recent emergence of ABR infections demands that we learn to use them more responsibly. Newborns, for instance, are normally given a preventative treatment of antibiotics after birth to protect against possible infections. However, these drugs diminish the initial colonization of microbiome bacteria in the infant’s gut that could provide key physiological functions later in life. Research has shown that narrowing the diversity of a newborn’s microbiome can result in a higher chance of developing allergic asthma and other inflammatory diseases in the future. Furthermore, recent studies of an isolated South American tribe demonstrate the long-term effects of the potential overuse of antibiotics. A group of Yanomami Amerindians in southern Venezuela offers an ideal model of the undisturbed human microbiome, since the tribe has been isolated for more than eleven thousand years. Scientists found that the diversity of bacteria in oral, skin and fecal samples from the Yanomami Amerindians was significantly higher than the bacterial diversity of Western people. From this, we can infer that the long-term exposure to Penicillin and other similar drugs could inhibit the bacterium-human relationship. Interestingly, the microbiome of the isolated tribe contained some antibiotic-resistant bacteria, even though none of the Yanomami Amerindians had ever taken an antibiotic drug. The presence of these protective bacteria is most likely a result of exposure to naturally occurring antibiotic microbes in the soil. 
This unexpected finding suggests that a small amount of antibiotic resistance naturally occurs in the human microbiome. The fact that certain amounts of ABR organisms normally occur may provide an explanation for the extreme pace at which resistant diseases have arisen in Western civilization. Our substantial use of antibiotics may have poured gasoline onto a pre-existing fire, which allowed the naturally occurring resistant bacteria to spread throughout human society. The argument presented here is not against the use of antibiotics; it is simply a reminder to use our antibiotics responsibly. The advances of modern medicine have undoubtedly improved our defenses against disease, and we should not abandon those treatment options. However, we must be mindful of the very real threat posed by the rise of ABR microbes. To reduce this threat, the global community should take measures to control the amounts of antibiotics used in commercial industry. Comprehensive guidelines for estimating the risk of resistant bacteria should also be established. If we fail to use antibiotics responsibly, they in turn will eventually fail us.


By Ian Cohn

Edited By Timshawn Luh

This past week, a team of scientists from Harvard University, Leiden University, and Kobe University announced what has since been deemed a very exciting discovery: for the first time, complex organic molecules had been observed in an infant star system. Using a complex telescope and detector known as the Atacama Large Millimeter/submillimeter Array, the scientists detected acetonitrile (CH3CN) in a protoplanetary disk surrounding a one-million-year-old star 455 light-years away from our solar system. This provides yet another clue that our Earth, the only planet currently known to harbor life, may not be as special as we’d like to think.

Indeed, while this is the first time a complex organic (carbon-containing) molecule has been observed in a protoplanetary environment, the presence of complex organic molecules in space is nothing new. Back in 2011, researchers at the University of Hong Kong showed that stars at different phases in their life cycles could produce complex organic compounds (much more complex than acetonitrile) and eject them into space. Other examples of interstellar organic materials exist. Clouds of ethanol, large carbon-based molecules called fullerenes, and complex organic molecules like pyrene have all been shown to exist in space. This new discovery, then, just adds to the already mountainous pile of evidence that organic chemistry, the basis for life, is not unique to Earth.

The easy takeaways: we aren’t anything special, and life may not be some kind of sacred anomaly. Nonetheless, the question remains: if the chemistry exists, does the biology exist? Or even, how do we get from the chemistry to the biology? In other words, it’s still extremely unclear how the natural world progressed from the isolated reactions of organic chemistry to the complex, biotic (life-containing) systems which characterize biology.
Abiogenesis, the natural process of life arising from nonliving matter, remains one of the least understood and most thought-provoking questions in modern science. Several theories attempt to explain how life arose. One of the more well-known is commonly referred to as the “primordial soup hypothesis,” which posits that the early Earth had an atmosphere which encouraged the natural synthesis of various organic molecules, and that these reactions eventually increased in complexity until life arose. While this seems like a somewhat hand-wavy explanation, it does have an important history. In 1952, Stanley Miller and Harold Urey took a mixture of gases which would have been present in the atmosphere of the early Earth, simulated other physical and chemical conditions of this environment, and showed that these gases in the “primitive atmosphere” naturally led to the synthesis of over twenty different amino acids, the basic building blocks of proteins.

Several models beyond the one suggested by the Miller-Urey experiment exist, with some building upon the experiment’s principles and others defying them. For instance, the panspermia hypothesis posits that meteoroids and asteroids delivered microscopic life to Earth, though this just defers the burden of abiogenesis to another region of space rather than offering a satisfactory answer to how life first arose. Other theories, classified as “metabolism first” hypotheses, suggest that the chemical reactions underlying metabolism developed first and that life followed, perhaps through the natural formation of protocells (primitive spheres of lipids) to “section off” these metabolic reactions. Yet another set of theories suggests that life began at deep-sea vents, known as hydrothermal vents, where hydrogen-rich fluids erupt from the bottom of the ocean and create an environment that increases the concentration of organic molecules, perhaps leading to life.
Just as the origin of life remains an open question, so too does the question of whether Earth is the only place in the Universe to truly harbor life. Indeed, mounting evidence suggests that the chemical reactions which underlie biology may not be unique to Earth, but biological systems built on these reactions have yet to be discovered anywhere other than Earth.

By Jack Zhong

Edited By Josephine McGowan

Alzheimer’s disease is the 6th leading cause of death in the US, and yet it has no known cure, prevention method, or approach to slow its progression. Causing degeneration in the brain, Alzheimer’s disease has common symptoms that include dementia, memory loss, decline in speech, and confusion. The disease progresses gradually through seven major stages. Its victims include former president Ronald Reagan and Nobel laureate physicist Charles Kao, and the disease mainly affects people 65 years and older. Recently, a new drug, Aducanumab (BIIB037), has yielded promising results in clinical trials.

In attempting to treat Alzheimer’s disease and other neurodegenerative disorders, neuroscientists and doctors struggle with a wide gap in neuroscientific knowledge. Neuroscientists understand well how a neuron works on a cellular level, and psychologists have to a certain extent clarified how humans behave. Yet we do not understand well how the signals of millions of individual neurons throughout the brain integrate to cause behavior. Similarly, we do not know what goes awry in neural circuitry to cause neurodegenerative diseases, even if we can detect abnormalities in individual neurons. Sometimes a part of the brain can be removed with little consequence, yet a slight mutation in a gene can cause a devastating disease. We simply don’t understand exactly how changes in neurons lead to changes in behavior or brain function. Given our lack of knowledge in this respect, attempting to treat neurodegenerative diseases is like attempting to treat infectious diseases without knowing how viruses alter organ functions. Though there are many hypotheses on the causes of Alzheimer’s disease, scientists are still debating what the definitive cause might be.
Most of today’s treatments are based on the Cholinergic Hypothesis, which proposes that Alzheimer’s disease is caused by a reduction in acetylcholine, a molecule used in signaling between neurons. However, most drugs used to treat acetylcholine deficiency have not yet been proven to be effective in treating Alzheimer’s. Alternatively, the Amyloid Hypothesis suggests that amyloid beta (Aβ) deposits on the outside of neurons cause the disease. In support of the Amyloid Hypothesis, scientists note that the gene for the amyloid precursor protein (APP) is on chromosome 21, and many Down syndrome patients (who have an extra chromosome 21) exhibit Alzheimer’s disease by age 40. Mice with mutated APP genes exhibit amyloid plaques and Alzheimer’s-like symptoms. Also, APOE4 is a defective form of a protein that normally helps break down Aβ, and people with the APOE4 gene are at increased risk of Alzheimer’s disease. There are still many other hypotheses on the causes of Alzheimer’s disease not mentioned here.

When scientists at the biotech company Neurimmune developed Aducanumab, they sought to tackle the disease based on the Amyloid Hypothesis. Aducanumab is an antibody derived from healthy, aged donors without Alzheimer’s disease. The scientists figured that since the donors’ immune systems were resistant to Alzheimer’s disease, antibodies from these healthy individuals could be turned into treatments. This process of turning antibodies from healthy individuals into therapeutics is called "reverse translational medicine." When determining the mechanism of these antibodies, the scientists found that Aducanumab targets a specific recognizable region on Aβ. Antibodies work by selectively binding to recognizable regions of pathogens, called epitopes, and triggering an immune response to destroy the pathogen. Once in the brain, Aducanumab binds to aggregated Aβ, which is believed to cause Alzheimer’s disease, but leaves single Aβ molecules untouched.
Scientists testing the effectiveness of Aducanumab were greeted with promising results. There are many stages before drugs are released onto the market (Figure 4). Before human testing, scientists gave Aducanumab to mice carrying mutant APP genes for thirteen weeks, and Aβ plaques of all sizes were reduced. After the drug was found to be safe for human use, Biogen Idec started PRIME, a multi-dose study begun in 2012 and involving 166 people with Alzheimer’s disease. On March 20, 2015, analysis of the first data was presented. Aducanumab reduced amyloid deposits in 6 regions of the cerebral cortex. Large effects were observed after 1 year of dosing. The highest dose seems to reduce cortical amyloid to levels close to those normally detected. Further, cognitive tests administered to patients suggest that Aducanumab reduces cognitive decline in a dose-dependent fashion. PRIME is an ongoing study that will last until 2016.

The cure for Alzheimer’s disease may be around the corner if Aducanumab continues to yield encouraging results. Regardless of the eventual conclusion on Aducanumab, neuroscientists must continue to hunt down the cause of Alzheimer’s. Most importantly, neuroscientists need to work steadily to close the gap in our knowledge of Alzheimer’s at the level of the neuron, which could lead to a revolution in our understanding of the brain and in treatments of neurological disorders. Achieving this goal would mean eventually elucidating the etiology of the disease, as well as finding ways to prevent its progressive damage.

By Kimberly Shen

Edited by Hsin-Pei Toh

“If I still feel the smart of my crushed leg, though it be now so long dissolved; then, why mayst not thou, carpenter, feel the fiery pains of hell for ever, and without a body?” These are the words famously uttered by Herman Melville’s Captain Ahab as he bitterly reflects on the loss of his leg, emphasizing the disconnect between body and brain. Indeed, his words implicitly allude to the phantom limb phenomenon, which refers to the sensation that a missing limb is still connected to the body and moving as it normally does. Like Captain Ahab, many individuals who experience the phantom limb phenomenon can attest that although body and brain work together to create the perception of the physical self, there is sometimes a disconnect between sensory experiences and the brain’s perceptions.

Vilayanur Ramachandran, a researcher who specializes in behavioral neurology and visual psychophysics at the University of California, San Diego, developed a study to delve into this mystery. One of Ramachandran’s patients complained that he was experiencing painful cramps in his phantom arm because it felt as though his phantom hand was clenched so tightly that his fingernails were digging into his palm. Although the patient was well aware that his arm had been amputated, there was still an ongoing divide between his sensory experiences and what he knew to be true. To treat his patient, Ramachandran put a mirror into a cardboard box and asked the patient to put his existing hand into the box, next to the mirror. Thus, when the patient looked into the box, he saw the reflection of his existing hand, which served as a visual substitute for his phantom hand. Ramachandran then instructed the patient to practice clenching and unclenching his hand while looking at the mirror and pretending that he was looking at a reflection of his phantom limb.
With the help of Ramachandran’s ingenious illusion, the patient reported that the phantom sensations of pain disappeared after two weeks. The results of this study attest to the truly intricate relationship between the physical and the mental that contributes to the phantom limb experience, for neither the physical explanation (that inflammation of the severed nerves causes the condition) nor the psychological explanation (that sensations in a phantom limb reflect mental denial of the fact that the limb is no longer there) alone captures the full picture. After all, the brain must merge the physical experiences of the body with the cortical map of the body, otherwise known as the mental image. Thus, as the body changes over time, the cortical map is also expected to change. However, with the phantom limb phenomenon, the cortical map fails to match the physical changes the individual has already undergone, causing the person to experience sensations in a nonexistent limb. As a result, this phenomenon ultimately raises the question: If the sensations of a limb can continue long after the limb is no longer there, where is the line between an individual’s physical self and an individual’s perception of self?

By Julia Zeh

Edited by Arianna Winchester

Recent deep-sea expeditions have shown us that we don’t know much about the creatures lurking beneath the surface of the ocean. We see organisms that look and behave completely differently from creatures found near the surface, probably because conditions in the deepest parts of the ocean are so different from those closer to us. For example, because these parts of the ocean are so dark, deep-sea creatures often lose their bright coloring to adapt to their shadowy environment. Some of these creatures look so strange and alien that they are almost the stuff of nightmares!

The deepest part of the Mariana Trench is called the Challenger Deep, found in the western Pacific Ocean, just east of the Philippines. At Challenger Deep, the ocean floor lies seven miles below the ocean’s surface. To put this depth into perspective, the Challenger Deep is over a mile deeper than Mount Everest is tall. Very few people have ever travelled to the bottom, a place of high pressure, low temperature, and total darkness. Though we may assume the extreme conditions in Challenger Deep make it unable to sustain life, we find that the sides of the trench are home to many diverse life forms.

The life we find hidden in the depths of the ocean is quite different from the organisms that live closer to the surface. Most notably, an expedition in 2014 discovered a new species of snailfish that is able to survive at a greater depth than any other species of fish previously identified. This variety of snailfish was found over five miles deep in the Mariana Trench. The researchers described this “superfish” as appearing completely different from any other species of fish that they had ever seen before. It has wing-like fins on its sides and an odd-shaped head, and it looks very fragile and tissue-like as it glides through the water.
This new fish lies at the boundary of a theoretical limit, beyond which scientists believe the extreme pressure would make it impossible for any fish to survive. Though the scientists were able to attract these snailfish to a camera, they couldn’t catch any specimens for further study, which is necessary for the fish to be formally named as a new species. Alas, the snailfish remains a mystery.

Also caught on film was another very rare type of organism, the supergiant amphipod. Amphipods are shrimp-like crustaceans that are found in abundance in the Mariana Trench. During James Cameron’s expedition to Challenger Deep in 2012, the researchers observed supergiant amphipods as large as one foot. Consequently, these organisms were termed “supergiant,” since normal amphipods found closer to the surface are usually about the size of the top of a thumb. On land, this would be like seeing a caterpillar grow to the size of a small cat.

With each new research trip to the extreme depths of the ocean, video footage and photographs of deep-sea creatures are brought back that send shivers up our spines, both at the eerie physical appearance of the creatures and at the intrigue of something about which so little is known. Watch video footage of creatures filmed in the depths of the Mariana Trench here: https://www.youtube.com/watch?v=6N4xmNGeCVU

By Jack Zhong

Edited By Hsin-Pei Toh

While we are nowhere close to creating a human brain, we humans have successfully created artificial ones in the form of computers. This development took place in just over 50 years after Professor Alan Turing created the precursor to the first computers in order to break the Enigma encryption used by Nazi forces in their military communications during World War II. Indeed, the rate of progress in computer technology is increasing exponentially. For instance, computer chips are doubling in performance every two years, roughly in accordance with Moore’s law.

The next step in computer technology is creating Artificial Intelligence (AI). In fact, we already possess AI in the rudimentary sense. In video games, the computer operates units which behave intelligently in opposition to the player. While driving, we use GPS to automatically route the journey based on our preferences. These AI are specialized in a particular task and are called “weak AI.” Weak AI also specializes in a variety of tasks including calculations and repeated actions. However, the AI that scientists are working towards will have “general intelligence,” meaning it can perform any task that humans can perform. These machines, known as “strong AI,” would far exceed the capabilities of weak AI.

Creating strong AI is a controversial topic. Many of the world’s leading scientific and technological figures, including Stephen Hawking and Elon Musk, have expressed concerns about the effects of its creation. This is not a new concern, given films such as I, Robot, which have explored the notion of humanity’s demise due to AI. The argument is that strong AI may find humans threatening to its survival. After all, we expect these machines to altruistically serve our purposes while we constantly roll out new machines to replace the “outdated” models. Hawking and Musk argue that it would be highly plausible for AI to wipe out humans or subject us to strict control.
As unappetizing as it sounds, the intelligence of strong AI could eventually exceed that of humans. Weak AI have already surpassed humans in many specialized tasks. For instance, the chess-playing computer Deep Blue defeated Garry Kasparov, one of the greatest chess grandmasters of all time. While this does not prove that a chess-playing machine necessarily trumps its human counterpart, it does show that machines are capable of such feats. Furthermore, evolution could occur at a much faster rate in machines than in humans. If AI were able to combine the learning, synthesizing, and planning abilities of humans with its raw processing power, it is theoretically feasible for its intelligence to far exceed that of humans.

Of the many factors complicating human control over machines, differing ways of “thinking” stand out as one of the main obstacles. Take the example of the chess-playing computer. Typical chess-playing software may have the computer analyze all possible moves and outcomes to determine the best one. Meanwhile, a typical human player would analyze only a few appealing moves before choosing, and there is no certainty that any of the moves analyzed is the best one overall. Humans simply do not have the processing power to consciously analyze all possible moves and variations in a short time. An AI could be programmed to think in ways humans cannot, since computers have much greater processing power for some tasks. The “thinking” strategy of an AI could be updated accordingly to fit its needs. Yet the thinking of AI could be unpredictable or incomprehensible, especially with regard to ASIs (Artificial Super Intelligences). For instance, an ASI programmed to protect humans may find human activity self-destructive and try to imprison us for safety, as did the supercomputer in I, Robot. The reasoning and solutions proposed by an ASI may be too complex for our understanding; we would be like a spider trying to understand who built the house that it lives in.
In these situations, it could be hard or even impossible to ensure that the interests and thinking of the ASI would align with our own. In addition, opponents of strong AI development point out other potential mistakes that could compromise our ability to control our creations. Bugs, or unforeseen mistakes in the software commands written by programmers, appear frequently in modern computer code. While harmless in some instances, a bug can have serious ramifications. For instance, a software bug caused a Mars orbiter to crash. The vast amount of software necessary for strong AI would also magnify the number of bugs. Also, hackers could exploit bugs to alter the code in AI to serve ill purposes. In the worst case, a bug could undermine the safety mechanisms that programmers put in place to protect humans, or create unexpected AI behavior. The code-writing process thus needs tight regulation to avoid mistakes that could potentially lead to disaster.

Strong AI poses a sizable danger and should be developed with the utmost caution. While I do not believe that creating strong AI will lead to certain extinction for humans, I do think its creation would profoundly alter life as we know it. There is no guarantee that AI could solve all problems at an acceptable cost. As we approach the creation of strong AI, it’s more important to be cautious and observant than to buy into one prediction or the other. Many people did not foresee the rise of the Internet. While much of the argument against strong AI has focused on the AI itself, a mirror also needs to be placed in front of humans. With an aid such as a strong AI, a person with either good or ill intent could magnify his or her reach. The outcome depends on humankind’s ability to regulate itself as well as its creative process.
We may not be able to stop the eventual creation of strong AI and even super intelligent AI, but we can implement policies and regulations to ensure safe development and avoid regrettable, irreversible outcomes.

By Alexandra DeCandia

Edited By Timshawn Luh

Monarch butterflies are an iconic American species. Found in all 50 states, these orange-and-black backyard visitors delight children with their delicacy and grace. They pass through our gardens each year, participants in an annual, multi-generational migration among the farthest undertaken by an insect species. Travelling south from Canada and the United States, eastern monarchs traverse over 3,000 miles to reach warmer climes in the Sierra Madre Mountains of Mexico.

In the past two decades, North American monarch populations have plummeted. Once covering 20.97 hectares of overwintering habitat in 1996-1997, monarchs occupied a meager 0.67 ha in 2013-2014. This is a 97% decline in occupied land area. Such staggering losses are cause for concern. Some scientists and activists promote monarch butterfly protection under the United States Endangered Species Act. They argue that while extinction of the species is unlikely, we risk the loss of an incredible migratory event unparalleled on our continent.

Before concrete conservation action can be implemented, it is crucial to ascertain the cause of these declines. According to a series of emails I received from Environment New York, “Monsanto is driving the monarch butterfly to the brink of extinction.” The subject line simply reads: “Monsanto killing butterflies.” I found myself wondering “How?” but, more importantly, “Why?” The phrasing of these emails connoted a sense of malicious intent behind the actions of this controversial agricultural company. I imagined a group of nefarious individuals (executives, scientists, large-scale farmers) laughing maniacally as they ripped wings off butterflies amid a shower of money and herbicides pouring from the sky. This is hardly the reality of the situation.
The real impetus behind Environment New York’s campaign against Monsanto rests in the reduction of common milkweed (a weed species commonly found in corn and soybean fields) as a direct result of herbicide use on genetically modified crops. Common milkweed is the preferred food source of monarch butterfly larvae. Migrating adults lay their eggs on plants interspersed throughout the Midwest every year. Farmers consider the plant a major pest species, however, as it reduces overall agricultural yields. They have always employed herbicides to decrease milkweed populations in their fields, but use of the most potent herbicide, glyphosate or Roundup™ (Monsanto), was previously limited due to its negative effects on crops.

In the 1990s, Monsanto introduced genetically modified, glyphosate-tolerant corn and soybean plants to agricultural markets. By 2011, adoption of these crops reached 72% and 94%, respectively. Use of Roundup skyrocketed. Unsurprisingly, common milkweed populations crashed. Without common milkweed plants to feed their larvae, monarch butterflies were unable to adequately propagate their populations. The ever-increasing adoption of Monsanto’s Roundup Ready™ crops during the past two decades tracked the monarch declines almost perfectly.

It is important to note that the loss of milkweed is not the only stressor currently affecting monarchs. Extreme weather events (notably temperature fluctuations and changes in precipitation) associated with climate change and the loss of overwintering habitat at the hands of illegal loggers are also implicated in monarch declines. The stressors work synergistically. Evaluation of each factor’s relative impact on declines of butterfly populations, however, revealed that milkweed loss remains the primary cause. It appears Monsanto is killing butterflies.
On March 31, 2015, Monsanto announced that it would contribute $3.6 million to the National Fish and Wildlife Foundation’s Monarch Butterfly Conservation Fund over the next three years. An additional $400,000 was included “to partner with and support the efforts of experts working to benefit monarch butterflies,” a companywide announcement read. An optimist may view this commitment as a means of making amends, an attempt to right a wrong committed against butterflies. A cynic may see a carefully crafted public relations move that lacks long-term commitment to butterfly-friendly agricultural practice. Either way, Monsanto’s donation is a step in the right direction.

Monarch butterflies can be saved, and individual citizens can absolutely aid these efforts. To raise awareness, reach out to government officials, herbicide companies, and farmers to let them know you care about the fate of butterflies. To actively aid conservation, plant local milkweed species on your property to provide nursery habitat for migrating monarch larvae. We are not powerless in the fight to save monarchs, and it is a battle worth fighting. They are an iconic American species: a source of inspiration for writers, artists, scientists, and children exploring their own natural world for the first time. Don’t let them down; don’t let monarchs fall.

In the fall of 2014, the members of the Columbia Science Review sought to continue their mission of promoting scientific awareness and literacy in ways other than the methods already in place: the biannual publication of the Columbia Science Review, the online blog, and frequent outreach/on-campus events. After many hours of hard work and planning, the CSR began a new video series called Spread Science. The Spread Science video series was formed with the intention of "spreading science" by showcasing some of the incredible research being done by current Columbia undergrads in both on- and off-campus laboratories.
This is just one small step in our long-running mission to increase scientific awareness and literacy in the Columbia community and beyond, but we hope it will be an effective one nonetheless. Check out the very first installments of the series below, featuring Columbia College undergrads Sophie Park (CC'16), studying follicle stem cells in fruit fly ovaries, and Tina Liu (CC'17), conducting climate change research at the Lamont-Doherty Earth Observatory! Subscribe to our new YouTube channel for more videos and updates.

[youtube https://www.youtube.com/watch?v=WwlEKnTInbE]

[youtube https://www.youtube.com/watch?v=_7xz331ZBkc]

By Tiago Palmisano

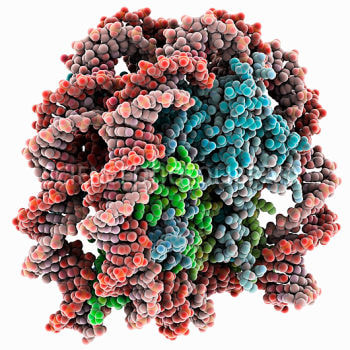

Edited By Bryce Harlan

In the modern scientific community, it is common knowledge that deoxyribonucleic acid (DNA) carries our genetic information. Every organism reads its DNA like a set of intricate instructions and passes on some of these instructions to its children through a process known as inheritance. DNA is our biological code. Yet, the link between inheritance and DNA was not discovered until 1952 when Alfred Hershey and Martha Chase, two brilliant molecular biologists, performed a landmark research study. Before the Hershey-Chase Experiment, there was a debate about whether DNA or proteins carried our genetic information. In fact, most scientists thought that proteins were the stronger candidates at the time. While DNA consists of a fixed sugar-phosphate backbone and only four nucleotide bases abbreviated A, T, G and C, proteins are built from twenty different amino acids, such as lysine or tyrosine. It made much more sense for our biological code to be made of twenty pieces rather than four, for the same reason that it’s easier to make more words with an alphabet of twenty different letters, as opposed to only using A, B, C and D. But after Hershey and Chase and decades of further research, it was accepted that DNA, not protein, was the only carrier of genetic information. However, a recent study may prove this 60-year-old assumption to be incomplete. Scientists at the University of Edinburgh have found that some biological information can be passed on across subsequent cell generations, regardless of DNA sequence. The paper was published in Science on April 3rd, and studies the role of histone proteins. The DNA within our cells is so long that it requires extensive folding, and specific proteins known as histones assist in the folding process. A group of eight histone proteins form a structure called a nucleosome, which the DNA strand wraps itself around.
Covalent modification of histone molecules can affect the transcription of the associated DNA. In other words, certain chemical groups can be added to or removed from the histones, and this can have a significant effect on the cell’s ability to read and use the DNA. Although our understanding of histone modifications is not new, the University of Edinburgh study demonstrates that these modifications can be passed on, regardless of DNA sequence. The study focuses on the histones in a strain of yeast that controls its DNA similarly to human cells. The researchers introduced a chemical change known as methylation into a histone protein in the yeast, specifically H3K9me, mimicking the chemical modifications that occur naturally. The histone methylation successfully affected the yeast’s transcription of the DNA, making the organism unable to read and use certain parts of the biological code. More importantly, this introduced methylation was passed on to the next generation of yeast cells, demonstrating that DNA-independent histone modifications can be inherited. This new data forces us to reconsider the definition of genetic information. Histone modifications affect how the DNA is used within the cell, and therefore affect the characteristics of the organism. In this way, some of our characteristics may be partially controlled through protein modifications, instead of exclusively by the direct DNA sequence. In a way, the histones carry genetic information, since they can pass on instructions for how our DNA is used. This study shows us that proteins and DNA can influence inherited traits independently. Such a discovery can give scientists a more thorough understanding of gene expression in humans. Normally, influencing the traits that a mother or father would pass on to a child would require directly changing the DNA sequence.
But the ability to modify histones instead would give doctors a potentially easier option for treating genetic diseases. This discovery could provide new insights in the field of genetic engineering, and lead to cheaper and more efficient research techniques. Regardless of its practical consequences, such a significant clarification in our understanding of the complex process of DNA transcription and inheritance is of monumental importance. Furthermore, it reminds us to question every assumption, especially in science.

By Ruicong Jack Zhong

Edited by Timshawn Luh

With global climate change becoming a pressing issue, it is now more important than ever to find sustainable sources of electrical energy. This past year, the US experienced wild winter weather with record-breaking snowstorms, and harsh weather is expected to continue and worsen. Electricity forms the basis of modern technology, but the generation of electricity has been unsustainable. Burning gas and coal emits greenhouse gases and contributes to climate change. As a solution, scientists have turned to the immense power of nuclear fission. Once popular as the “energy source of the future,” nuclear power plants have slowly been phased out in favor of other, cleaner energy sources. The enormous amount of energy produced by nuclear fission, splitting heavy atomic nuclei into lighter nuclei, comes with costly drawbacks. For instance, the spent nuclear fuel remains radioactive for centuries, and it must be stored in special underground areas for safety. Furthermore, heavy atoms are extremely unstable, and controlling nuclear fission is very tricky. If the reaction occurs too quickly, the reactor essentially becomes an atomic bomb. The nuclear meltdowns at Three Mile Island and Chernobyl are tragic stories of the dangers of nuclear fission. As an alternative to nuclear fission, nuclear fusion now promises to be the energy source of the future. Used by stars to produce light, nuclear fusion involves combining light hydrogen nuclei to form helium nuclei, as opposed to splitting heavy atomic nuclei into lighter nuclei. Interestingly, the energy produced by a single fusion reaction is less than that of a fission reaction. Yet, for the same mass of fuel used, fusion produces more energy than fission reactions. Hydrogen isotopes used in fusion are much lighter than the heavy atoms like uranium used in fission reactions. Fusion power offers many benefits over current sources of energy. The byproduct of fusion, helium nuclei, is relatively harmless.
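The per-mass comparison between fusion and fission can be checked with rough numbers. The sketch below assumes textbook values that do not appear in this article: about 17.6 MeV released per deuterium-tritium fusion and about 200 MeV per uranium-235 fission, with fuel masses rounded to whole atomic mass units. It is only a back-of-the-envelope estimate, not a reactor calculation.

```python
# Approximate textbook figures (assumptions for illustration, not from the article).
MEV_PER_DT_FUSION = 17.6      # energy from one deuterium-tritium fusion, in MeV
MEV_PER_U235_FISSION = 200.0  # typical energy from one uranium-235 fission, in MeV
DT_FUEL_MASS_U = 5.0          # deuterium (2 u) + tritium (3 u), rounded
U235_FUEL_MASS_U = 235.0      # one uranium-235 nucleus, rounded


def energy_per_mass_unit(energy_mev, fuel_mass_u):
    """Return the energy released per atomic mass unit of fuel, in MeV/u."""
    return energy_mev / fuel_mass_u


fusion_mev_per_u = energy_per_mass_unit(MEV_PER_DT_FUSION, DT_FUEL_MASS_U)
fission_mev_per_u = energy_per_mass_unit(MEV_PER_U235_FISSION, U235_FUEL_MASS_U)
ratio = fusion_mev_per_u / fission_mev_per_u

print(f"Fusion:  {fusion_mev_per_u:.2f} MeV per mass unit of fuel")
print(f"Fission: {fission_mev_per_u:.2f} MeV per mass unit of fuel")
print(f"Fusion releases roughly {ratio:.1f} times more energy per unit mass")
```

Under these assumptions a single fission event releases more energy than a single fusion event, but because the fusion fuel is so much lighter, fusion comes out ahead by roughly a factor of four per unit of fuel mass.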
Better yet, the hydrogen isotopes used in fusion can be isolated from sea water. In 2010, a new catalyst was developed at UC Berkeley to convert water into hydrogen. Further, fusion reactions are easier to control than fission reactions: fusion requires a steady input of energy, so the reaction stops if that input is interrupted. Compared to fission reactions, there is little risk of a meltdown or explosion. Finally, fusion power produces enough energy to be a feasible major power source, replacing unclean sources like gasoline, coal, or nuclear fission. In recent years, scientists achieved controlled ignition of fusion reactions. Put simply, ignition occurs when the reaction’s energy output is greater than the reaction’s activation energy. Because the positive charges of hydrogen nuclei repel each other, a huge amount of energy is needed to fuse the nuclei and reach ignition. This energy requirement has made reaching ignition extremely difficult. The first man-made device to achieve fusion ignition was the hydrogen bomb Ivy Mike, made in 1952. In 2013, the National Ignition Facility (NIF) in California achieved controlled fusion ignition by using a series of ultra-powerful lasers to compress and ignite a fuel pellet. A different method is used in the International Thermonuclear Experimental Reactor (ITER) project, which hopes to build a model commercially viable fusion reactor by 2019. ITER uses one of the many other proposed methods to achieve fusion; it runs a current through plasma to heat it and confines the plasma with magnetic fields. Other prominent developments in fusion, like Lockheed Martin’s high beta fusion reactor, also promise to achieve ignition within a few decades. While fusion could replace unclean sources of power, fusion power can go hand in hand with other clean sources of energy. In fact, they operate in different locations and can work together to generate power. To illustrate, fusion reactors require a large area of land.
Hydroelectric and tidal power plants take advantage of moving waters and would be situated on the coast. Wind power can operate in farmlands, oceans, and plains because each windmill occupies little space, and solar panels could be deployed in urban areas and deserts. If fusion power is realized, it would completely alter the political landscape of Earth. Many countries would switch to fusion power and no longer be dependent on fossil fuels for energy. With the introduction of affordable high-quality electric vehicles, consumers have even less reason to continue using gasoline and diesel powered vehicles. For example, the Tesla Model S and Model 3 would be able to travel over 250 miles on a single charge, and they can charge in less than 1 hour at Supercharging stations. Decreased use of fossil fuels would significantly diminish the political power of oil companies and oil-rich countries in the Middle East. It would also significantly decrease greenhouse gas emissions and their negative effects. Ultimately, fusion power will lead to a new era of productivity and sustainability.
